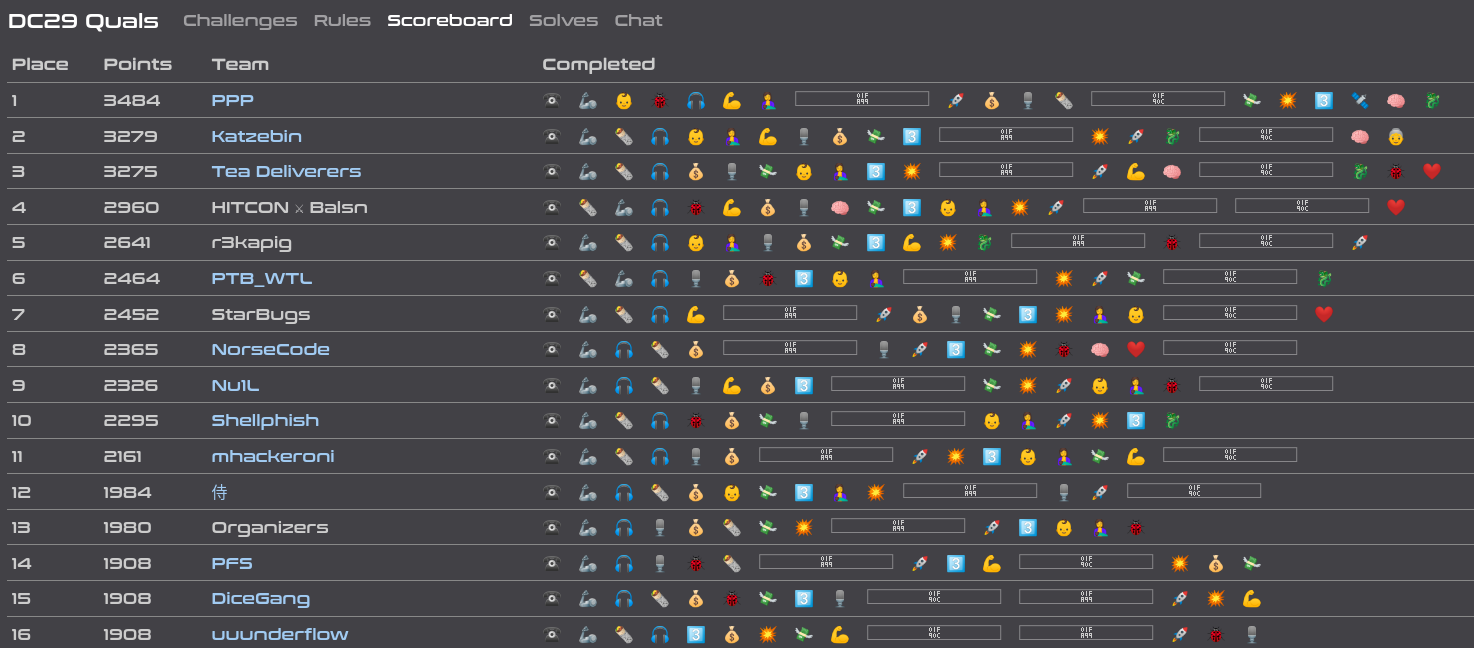

DEF CON CTF Quals 2021: Day 2

This is the summary for me on the second day I played DEF CON CTF. The commentary for day 1 is available here. In this blog post, only the solutions for qoo-or-ooo, back-to-qoo and pooow-buddy are written. Since there are a lot of new stuff, I may not be able to explain them well. Please bear with me...

Challenge: qoo-or-ooo & back-to-qoo⌗

qoo-or-ooo (58 solves, 120 points)

This is another QOO's challenge. Wait, is this QOO or OOO?

qoo-or-ooo.challenges.ooo 5000Files: service.py, backend.py, coin.py, game.py, players.py

Part I: Getting started⌗

⏲️ ⏲️ Day 2, 04:56 (1256 minutes in) ⏲️ ⏲️

OOO decided to release a crypto challenge shortly after I am asleep. While I am dreaming, Poignant Pineapple and p_nack managed to beat the game by luck and shared the complete transcript of the communication. They noticed that the problem is solvable by brute-forcing. Unfortunately, there is an implementation flaw on their solution script and they are unable to retrieve the flag at the moment.

⏲️ ⏲️ Day 2, 08:50 (1490 minutes in) ⏲️ ⏲️

There are already 17 solves when I am awake... Well, I understand the hunger of the crypto guys during DEF CON. Since p_nack has already read the challenge while I am asleep, he introduced me the challenge.

We will be playing a game involving four players: $P_1$ (ourselves), $P_2$ (Zardus), $C_1$ and $C_2$ (two random guys). We will be teaming up with Zardus competing with $C_1$ and $C_2$. There are 30 rounds in a game and in each round, each of us will toss a coin. If $P_1 \oplus P_2 = C_1 \times C_2$, then we win the round. The objective is to win over 85% of the games, i.e., at least 26 rounds.

If we win, we will receive the flag. It is encrypted with a key derived from a subsequence of $P_2$'s when we are playing the game. That said, the key is no longer than 30 bits and it is easy to brute force.

We are able to know $C_1$ before we decide the value of $P_1$. That said, we only have 50% chance to win a round. Hence, we have only 0.003% to win the game - we are expected to gain the flag once in 33K games.

However, that is not the end. To make the challenge more interesting (or confusing), they tried to introduce a secret quantum coin which can be tossed and we will be automatically using the measured value as $P_1$. Poignant Pineapple has observed that the chances to win by tossing the coins (and do nothing else) is much higher - we are able to win once in about 10 games.

Part II: Wait, we are not prepared for a sequel!⌗

⏲️ ⏲️ Day 2, 10:20 (1580 minutes in) ⏲️ ⏲️

OOO decided to release a sequel of the challenge... They have increased the number of rounds from 30 to 128 and nothing else. Well, it is very likely that there is an unintended solution in the original challenge.

back-to-qoo (43 solves, 128 points)

You are pulled back to QOO again. QOO or OOO? Whatever.

back-to-qoo.challenges.ooo 5000Files: service.py, backend.py, coin.py, game.py, players.py

⏲️ ⏲️ Day 2, 10:25 (1585 minutes in) ⏲️ ⏲️

Shortly later, the bot told us that back-to-qoo is already solved.

Part III: A two-minute review on quantum mechanics⌗

Let's assume that there are four quantum numbers: $\lvert0\rangle$, $\lvert1\rangle$, $\lvert+\rangle$ and $\lvert-\rangle$. No some quantum logic gates:

- Hadamard gate $H$ -

qubit.H():

$H(\lvert0\rangle) = \lvert+\rangle$, $H(\lvert1\rangle) = \lvert-\rangle$, $H(\lvert+\rangle) = \lvert0\rangle$ and $H(\lvert-\rangle) = \lvert1\rangle$. - Measurement $M$ -

qubit.measure().

This is an operation to observe a qubit and return either 0 or 1. $M(\lvert0\rangle) = 0$ and $M(\lvert1\rangle) = 1$. However for $M(\lvert+\rangle)$ and $M(\lvert-\rangle)$, there is a 50% chance to return a 0 (otherwise a 1).

In reality, a superposition will be collapsed after it is measured. However, the non_destructive flag in .measure() stop the qubit from collapsing. Simply put, the qubit would not change (unlike what it usually does) after it is measured.

Part IV: Putting our guessing hats on⌗

⏲️ ⏲️ Day 2, 10:45 (1605 minutes in) ⏲️ ⏲️

We could only guess what's happening about the secret coin (which is a qubit) since we don't have its corresponding source code. Instead, let's look at the source code on how Zardus bets:

class Zardus(Player, SecretPlayer):

# ... omitted ...

def bet(self, gameid, referee): # referee = c2

qubit = self.qubits[gameid]

if referee == 1:

qubit.H()

res = qubit.measure(non_destructive=True)

qubit.H()

else:

res = qubit.measure(non_destructive=True)

self.bases.append(referee)

return res

# ... omitted ...After while, we assumed that Zardus is actually using the secret coin to collude with us. We also assumed that the secret coin is initially either $\lvert0\rangle$ or $\lvert1\rangle$.

Assume that $k\in\{0, 1\}$ and define $\lvert k \rangle$ be coin we and Zardus shared secretly. Denote $C_2$ be the bet of Zardus' opponent (which also serves as his referee) and $P_2$ be his bet. If $C_2 = 0$, then $P_2 = M(\lvert k\rangle) = k$. Otherwise, $P_2 = M(H(\lvert k\rangle))$ will be randomly picked from 0 or 1.

Below shows a sequence of $P_1$, $P_2$, $C_1$ and $C_2$'s in a game, where I am tossing the secret coin every time. If my guess is correct, then $C_2 = 0$ would imply $P_1 = P_2$ - and it actually holds! We are more convinced with the assumption now.

p1s = [0, 1, 1, 1, 0, 1, 1, 1, 0, 0, 1, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 0, 1, 1, 1, 0]

p2s = [0, 1, 0, 1, 0, 1, 1, 1, 1, 0, 1, 0, 1, 0, 0, 1, 0, 0, 0, 1, 1, 0, 0, 1, 1, 0, 1, 0, 1, 0]

c1s = [0, 1, 1, 0, 0, 0, 1, 0, 1, 0, 0, 1, 1, 0, 0, 1, 0, 0, 1, 0, 1, 0, 0, 0, 1, 0, 0, 1, 1, 0]

c2s = [0, 0, 1, 1, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 1, 0, 0, 1, 1, 1, 1, 0, 0, 0, 0, 1, 0, 0]With that said, we actually have a 75% chance winning a round! Therefore we have around 10% to beat the game and win an encrypted flag from Zardus.

Part V: Why can't we brute force?⌗

⏲️ ⏲️ Day 2, 12:30 (1710 minutes in) ⏲️ ⏲️

Poignant Pineapple — Today 12:30

welp, i finished running the script, no flag

so our script must still be wrong?

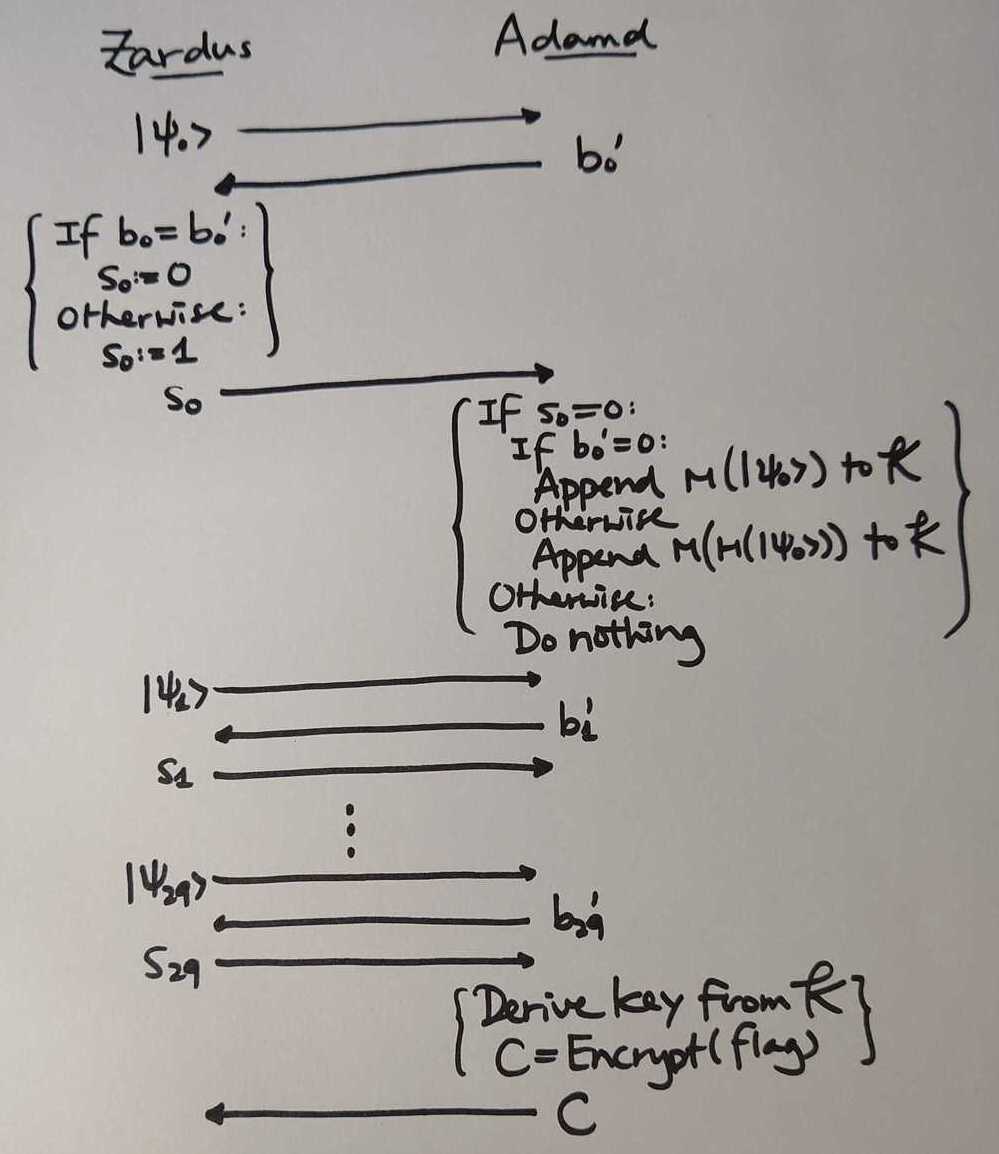

At the end of part I, we fix the script that tries to brute-force the entire key space (with 30 bit entropy) but in vain. Luckily I am able to spot out the other mistake we made - the entropy is not 30 bits long, but 15 bits in average. Here we introduce Adamd, the one who actually encrypts the flag and sent it to Zardus (and he leaked it to us - traitor!). Let's see how do Zardus and Adamd chat implemented in players.py.

⏲️ ⏲️ Day 2, 12:49 (1729 minutes in) ⏲️ ⏲️

Let $\lvert \psi_0 \rangle$, $\lvert \psi_1 \rangle$, ..., $\lvert \psi_{29} \rangle$ be the qubits shared between us and Zardus. The qubits $\lvert \psi_i\rangle$ and the basis $b_i := C_{2, i}$ is determined before the communication, while $b'_i \in \{0, 1\}$ is randomly selected by Adamd. Since one bit of entropy is added to $\mathcal{K}$ if $b_i = b'_i$, there are in average 15 bits added. Also, as we are already given $b_i$ and $b'_i$ for $0 \leq i < 30$, we actually knew the number of bits of the key entropy. We can exhaust the whole key space easily. Eventually we have the flag for qoo-or-ooo!

Flag: OOO{qoo_is_a_good_competitor_and_zardus_is_a_leaker}.

Part VI: We don't need to brute force at all!⌗

⏲️ ⏲️ Day 2, 13:29 (1769 minutes in) ⏲️ ⏲️

I extracted a set of p2s, c2s, bs and key that allow me to win the first flag. Hereby c2s and bs respectively stand for $\left(C_{2, 0}, C_{2, 1}, ..., C_{2, 29}\right)$ and $\left(b_0, b_1, ..., b_{29}\right)$, while p2s means $\left(M(\lvert \psi'_0\rangle), M(\lvert \psi'_1\rangle), ..., M(\lvert \psi'_{29}\rangle)\right)$ (where $\psi'_i = \psi_i$ if $C_{2, i} = 0$ and $\psi'_i = H(\psi_i)$ otherwise).

c2s = [0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 0, 0]

bs = [1, 1, 0, 1, 1, 1, 1, 1, 0, 0, 0, 0, 1, 1, 1, 0, 1, 1, 1, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 1]

# * * * * * * * * * *

p2s = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 1, 0, 1, 0, 0, 1, 1, 0, 0, 0, 1, 1, 1, 0, 0]

key = [ 1, 0, 0, 1, 1, 0, 0, 1, 1, 0 ]Wait... key is actually a subsequence of p2s, and they are picked if c2s = bs. This implies we don't need to exhaust the keys at all. Therefore, when the number of rounds is increased to 128, the only difficulty is to win the game. The probability of winning the game is decreased from 10% to 0.3%. Poignant Pineapple is repeatedly playing with Zardus and friends while I am writing a script to decrypt the incoming flag. We are able to win the game after 500 rounds and get the flag for back-to-qoo.

Flag: OOO{zardus_is_still_a_leaker_and_you_win_QOO_again}.

Part VII: Postmortem⌗

Turns out the quantum challenge is not something new. It composes of two parts:

- The 2-on-2 game we played is the CHSH Game1. There is an existing strategy that wins around 85%.

- The key for the communication is derived by the BB84 Key Distribution2. Since we have everything that is used as key exchange, we of course have the key.

Interestingly, we thought that OOO fixed the unintended solution for the second part. We do not know our approach on the first part is also unintended...

Challenge: pooow-buddy⌗

pooow-buddy (52 solves, 122 points)

Tired of POWs? Here's a helpful program to solve them for you!

pooow-buddy.challenges.ooo 5000Files: service

Part I: How do you turn this on?⌗

⏲️ ⏲️ Day 2, 13:45 (1785 minutes in) ⏲️ ⏲️

Although the challenge is not tagged with crypto, it attracts me because of its name. I made a challenge earlier called prooof-ooof-wooork that is intended to mimic OOO's challenge naming style. Of course, the challenges are totally different and irrelevant. This is how it looks when nc-ed:

mmmm mmmm mmmm # # #

mmmm m" "m m" "m m" "mm m #mmm m m mmm# mmm# m m

#" "# # # # # # #"m m m" #" "# # # #" "# #" "# "m m"

# # # # # # # # #m#m# # # # # # # # # #m#

##m#" #mm# #mm# #mm# # # ##m#" "mm"# "#m## "#m## "#

# m"

" ""

┌───────────────────────────────────────────────────────────────── MAIN MENU ─┐

│ │

│ > Dispatcher commands: │

│ │

│ start │

│ status │

│ stop WORKER_ID │

│ dispatch WORKER_ID WORKER_COMMAND │

│ poll │

│ add_challenge NUM_ZEROES CALLBACK_TYPE CALLBACK_ARG PREFIX │

│ quit │

│ │

│ > Worker commands: │

│ │

│ hash ITERATION CHALLENGE_SIZE CHALLENGE │

│ │

└─────────────────────────────────────────────────────────────────────────────┘

Dispatcher command> We are given few commands. Let's briefly explain what they are:

startstarts a new worker.statusshows the current status of the workers and the challenges.stopstops a worker with given id.dispatchassigns a proof-of-work task to a worker.pollpolls the workers.add_challengeadds a proof-of-work challenge. The callback will be called if they are able to find $x$ such that $\text{SHA256}(\text{prefix} + x)$ ends withNUM_ZEROESzero bits.

I was still confused even if I understood what the operations are. Let's go through an example. Suppose that we are going to find x such that SHA256("foo" + x) ends with 24 null bits. We can execute the below sequence of dispatcher commands:

start

add_challenge 24 echo done foo

dispatch 0 hash 100000000 7 foolishAfter a while, done foolish0000000096666529 is printed and we can confirm that its SHA256 digest ends with three null bytes.

hashlib.sha256(b'foolish0000000096666529').hexdigest()

# '2a2f08e8e58da14f8cfd7af0ac69fabca766631c4012729719add8a739000000'Part II: Command injection? Yes command injection!⌗

There are three CALLBACK_TYPEs they provided: echo, curl and exec. The binary actually wraps the callback into a system call - here's how:

echo CALLBACK_ARG PREFIX:echo {CALLBACK_ARG} {PREFIX}{SOLUTION}curl CALLBACK_ARG PREFIX:curl http://{CALLBACK_ARG}?pow={PREFIX}{SOLUTION}exec CALLBACK_ARG PREFIX:./{CALLBACK_ARG} {PREFIX}{SOLUTION}

Unfortunately, the callback argument is not vulnerable to command injection. Since there are quite a number of teammates looking at the challenge, I decide to move to smart-cryptooo.

⏲️ ⏲️ Day 2, 19:32 (2132 minutes in) ⏲️ ⏲️

publicqi found that it is possible to perform command injection using the PREFIX parameter when we dispatch tasks to the workers.

start

add_challenge 1 echo A c

dispatch 0 hash 131313131313 21 c;cat${IFS}flag${IFS}Flag: OOO{thread safety is not a lie}

Challenge: smart-cryptooo⌗

smart-cryptooo (5 solves, 343 points)

Existing encryption schemes are just too dumb. OOO is here to change that!

Files: https___oooverflow.io_philosophy.html-secret.enc, anc.py

Part I: Machine learning, how?⌗

⏲️ ⏲️ Day 2, 17:09 (1989 minutes in) ⏲️ ⏲️

There are two files provided by the challenge. The .enc file is an encrypted OOO philosophy, with the flag hidden in the middle, with the cryptosystem $\mathcal{C}$. On the other hand, anc.py is a script that trains three neural networks:

- Alice is the encryption network that takes message $m$ and key $k$ and returns a ciphertext $c$,

- Bob is the decryption network that takes ciphertext $c$ and key $k$ and returns a message $m$, and

- Eve is the eavesdropper network that takes only the ciphertext $c$ and returns a message $m$.

Note that $m, c, k \in \mathbb{[-1, 1]}^{64}$, where $m$ and $k$ are 8 bytes. To convert a 8-byte message block to $\mathbb{R}^{64}$, it is first encoded to a 64-bit array and replace those 0s to -1. On the other hand, each entry of $c=(c_1, c_2, ..., c_{64})$ is packed as a 64-bit floating point number. Hence, a 8-byte plaintext will be encrypted to a 512-byte ciphertext.

9shiba noticed that ANC is short for adversarial neural cryptography3 and found a reference implementation4 that look alike to the Python file given. To summarize, the objective for the training process is to generate two models Alice and Bob such that they could encrypt and decrypt correct, while Eve is unable to decrypt without the key. However, we are not even given the model. What can we do?

Part II: Some observations and attempts⌗

Recovering the parameters?⌗

⏲️ ⏲️ Day 2, 20:17 (2177 minutes in) ⏲️ ⏲️

With the summary function, we are able to see what is happening between the layers. 17K parameters are trained and most of the parameters come from the dense layer. I think recovering those parameters are not difficult. Well... I don't even know where to begin.

alice_model.summary()

# Model: "alice"

# __________________________________________________________________________________________________

# Layer (type) Output Shape Param # Connected to

# ==================================================================================================

# input_1 (InputLayer) [(None, 64)] 0

# __________________________________________________________________________________________________

# input_2 (InputLayer) [(None, 64)] 0

# __________________________________________________________________________________________________

# concatenate (Concatenate) (None, 128) 0 input_1[0][0]

# input_2[0][0]

# __________________________________________________________________________________________________

# dense (Dense) (None, 128) 16512 concatenate[0][0]

# __________________________________________________________________________________________________

# activation (Activation) (None, 128) 0 dense[0][0]

# __________________________________________________________________________________________________

# reshape (Reshape) (None, 128, 1) 0 activation[0][0]

# __________________________________________________________________________________________________

# conv1d (Conv1D) (None, 128, 2) 10 reshape[0][0]

# __________________________________________________________________________________________________

# activation_1 (Activation) (None, 128, 2) 0 conv1d[0][0]

# __________________________________________________________________________________________________

# conv1d_1 (Conv1D) (None, 64, 4) 20 activation_1[0][0]

# __________________________________________________________________________________________________

# activation_2 (Activation) (None, 64, 4) 0 conv1d_1[0][0]

# __________________________________________________________________________________________________

# conv1d_2 (Conv1D) (None, 64, 4) 20 activation_2[0][0]

# __________________________________________________________________________________________________

# activation_3 (Activation) (None, 64, 4) 0 conv1d_2[0][0]

# __________________________________________________________________________________________________

# conv1d_3 (Conv1D) (None, 64, 1) 5 activation_3[0][0]

# __________________________________________________________________________________________________

# activation_4 (Activation) (None, 64, 1) 0 conv1d_3[0][0]

# __________________________________________________________________________________________________

# flatten (Flatten) (None, 64) 0 activation_4[0][0]

# ==================================================================================================

# Total params: 16,567

# Trainable params: 16,567

# Non-trainable params: 0Similar ciphertexts?⌗

⏲️ ⏲️ Day 2, 20:53 (2213 minutes in) ⏲️ ⏲️

Let's talk about the mode of operation specifically for the challenge. Suppose that we are going to encrypt $n$ message blocks: $M_1, M_2, ..., M_n$. Let $K_1$ be the initial key. The first chunk of 16 blocks $M_1, M_2, ..., M_{16}$ will be encrypted with $k_1$. If there are more to encrypt, 8 bytes will be randomly generated as $K_2$ (the next key). $K_2$ will be encrypted by $K_1$ and appended to the ciphertext. After that, encrypt the next chunk of blocks $M_{17}, M_{18}, ..., M_{32}$ with $K_2$ and generate $K_3$ as the next key. This is repeated until there are no more to encrypt.

We have some plaintext-ciphertext pairs since we knew they are encrypting their philosophy. I was trying to find two ciphertexts in the same chunk that looked alike (by measuring their distances). Can we approximate the neural network as an affine transformation?

Biased ciphertexts?⌗

⏲️ ⏲️ Day 2, 21:08 (2228 minutes in) ⏲️ ⏲️

The last layer would be an activation layer that performs a tanh, their output would lie between $[-1, 1]$. However, we also observed the output is very biased. Here are some statistics for the ciphertext:

- Only 1K (out of 210K) numbers are positive, and

- The minimum and maximum are respectively -0.66 and 0.11.

We had a hypothesis about the bias, but it seems that this is not helpful recovering the message...

Part III: Postmortem⌗

Well... We are out of ideas. After the contest, I discussed with Vergissmeinnicht and he said that the ciphertext can be approximated as an affine relation to the plaintext. I'll try it out later...

- Stephen DiAdamo, Janis Nötzel (2019) "CHSH Game"

https://tqsd.github.io/QuNetSim/examples/chsh.html ↩ - Charles H. Bennett, Gilles Brassard (1984) "Quantum Cryptography: Public Key Distribution and Coin Tossing"

https://researcher.watson.ibm.com/researcher/files/us-bennetc/BB84highest.pdf ↩ - Mart ́ın Abadi and David G. Andersen (2016) "Learning to Protect Communications with Adversarial Neural Cryptography"

https://mathybit.github.io/assets/docs/csai/Google_adv_neural_crypto.pdf ↩ - Adrian Pacurar (2018) "Adversarial Neural Cryptography"

https://mathybit.github.io/adversarial-neural-crypto/ ↩