ångstromCTF 2021: Cache Money

I played ångstromCTF 2021 for @blackb6a to spend my Easter holiday. I solved most of the reverse and cryptography challenges alone. In particular, Cache Money is one of the harder crypto challenges that I spent more than one day dealing with. It is very rewarding, and eventually four teams ended up solving it.

Challenge Summary⌗

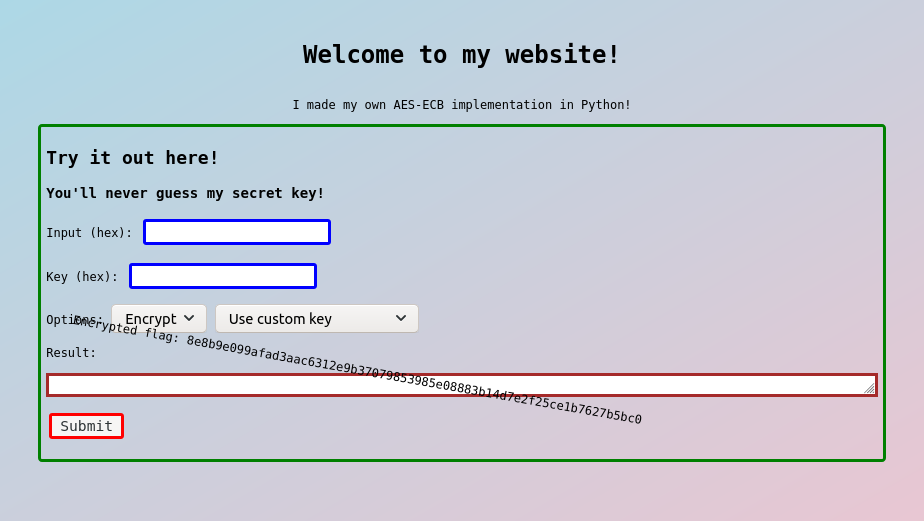

This challenge reimplements the Advanced Encryption Standard (AES) on 128, 192 and 256-bit keys. The encryptor is equipped with caches and we are given a service to encrypt (or decrypt) our messages. In short, there are four oracles provided by the service ($k_0$ is the fixed secret key and $b \in \{128, 192, 256\}$).

- $\mathcal{E}(m, k)$ encrypts a message $m$ with $k$,

- $\mathcal{D}(c, k)$ decrypts a ciphertext $c$ with $k$,

- $\mathcal{E}_0(m, b)$ encrypts a message $m$ with the first $b$ bits of $k_0$, are

- $\mathcal{D}_0(c, b)$ decrypts a ciphertext $c$ with the first $b$ bits of $k_0$.

Apart from the ciphertext (resp. plaintext), each oracle returns also the amount of time, in nanoseconds, used for encryption (resp. decryption).

Additionally, there is an flag $m_0$ encrypted with the $k_0$ under AES-256. The objective is of course decrypt and retrieve the flag. Needless to mention, they had measures to avoid the flag being retrieved by calling $\mathcal{D}_0(c_0, 256)$ directly. At least I am not awared of a way to solve this without recovering the key.

Solution⌗

Part I: What is that cache?⌗

The caching strategy⌗

From the challenge, there is a Rijndael class that encrypts and decrypts messages. All of the functions inside the class are decorated with @instance_method_cache and they will call the cache method defined below:

def instance_method_cache(f):

# lru_cache uses the same cache for every instance which kinda sucks

# so i made my own cache implementation

def wrapper(self, *args):

key = (f.__name__, *args)

try:

return self.cache[key][0]

except TypeError:

# deal with unhashable arguments

return f(self, *args)

except KeyError:

# remove the least recently used element

# dont want the cache blowing up

while len(self.cache.keys()) >= 300:

mintimestamp = timestamp()

keys = list(self.cache.keys())

lowest = -1

for i in range(len(keys)):

if self.cache[keys[i]][1] < mintimestamp:

mintimestamp = self.cache[keys[i]][1]

lowest = i

else:

continue

del self.cache[keys[lowest]]

ret = f(self, *args)

self.cache[key] = (ret, timestamp())

return ret

return wrapperSuppose we want to call $\mathcal{F}(x)$. This is how the caching works:

- If $(\mathcal{F}, x)$ is cached, return the cached value of $\mathcal{F}(x)$.

- Drop the oldest entry from the cache until there are less than 300 entries.

- Compute $y := \mathcal{F}(x)$, cache $y$ as the value to $(\mathcal{F}, x)$ and return it.

Caching in action: Encrypting a dummy flag with a dummy key⌗

I added a hook to log the entries and see how the function calls are triggered:

- self.cache[key] = (ret, timestamp())

+ t2 = timestamp()

+ val = (ret, t2)

+ self.cache[key] = val

+ print(f'[+] self.cache[{key}] = {val} (time spent: {t2 - t1})')This is a transcript that encrypts actf{this_is_a_test_flag} with the key being Tes3UeYGqoq6vatPmlM6uQrTA7OTLNP8. There are two parts:

- (Lines 1-369) Initializes the

Rijndaelclass with the key. - (Lines 375-5690) Encrypts the message.

For the oracles, only second part will be timed.

Part II: Obviously a timing attack, but how?⌗

It takes around 0.7 seconds to encrypt a random block of message under AES-256. A costy operation would be MixColumns - it is called 13 times and each contributes ~6% to the total encryption time (hence around 85% in total). Unfortunately, it is not feasible to read cached MixColumns for the timing attack. Here's why:

- Suppose that $m_1, m_2, ..., m_{13}$ are the inputs for

MixColumns. It is not likely to find $m_i = m_j$ for some distinct pairs $(i, j)$. - The time fluctuates a lot and it is not reliable measuring a 6% difference.

Well, I think timing attack is difficult solely with the second point. There are many function calls triggered by a single encrypt_block, including:

- ~1.8K

Addcalls,- 15 of them add two 4×4 matrices (~5ms each),

- 60 of them add two array of 4 entries (~1ms each),

- the remainder adds two numbers (~0.2ms each).

- ~380

Multiplycalls (~1ms each), - ~350

Doublecalls (~0.2ms each), - 56

SubWordcalls (~0.2ms each), - 14

ShiftRowscalls (~0.2ms each), and - 13

MixColumnscalls (~40ms each).

That is a lot of uncertainty. It would be much better if we are able to determine whether a single Add operation is cached... Is it even possible?

I had no idea. The challenge author released a hint few hours later:

Hint 1: This is also partially a web challenge...look for something you can exploit to make things more consistent.

There must be something fishy being a web challenge. Let's look at the source code for the encryption endpoint:

@instance_method_cache

def encrypt(self, m):

try:

return b"".join(

self.encrypt_block(m[i:][:16]) for i in range(0, len(m), 16)

)

except:

return b"Error"

@app.route("/api/enc", methods=["POST"])

def encrypt():

m = tuple(request.json["a"])

# Does not pad if the length of message is a multiple of 16

if len(m) % 16 != 0:

m = m + (0,) * (16 - (len(m) % 16))

if request.json["secret"] not in ["secret128", "secret192", "secret256"]:

k = tuple(request.json["k"])

else:

# Takes the first n bytes of the secret if we are using k0 for encryption.

k = key[

: {"secret128": 16, "secret192": 24, "secret256": 32}[

request.json["secret"]

]

]

try:

cipher = Rijndael(k)

except ValueError:

return jsonify({"result": "Invalid key", "time": 0})

start = timer()

enc = cipher.encrypt(m)

end = timer()

del cipher

return jsonify({"result": enc.hex(), "time": end - start})Note that if we are encrypting the message block actf{this_is_a_t with $k_0$ under AES-256, then this is the JSON object we send:

// Request

{

"a": [97, 99, 116, 102, 123, 116, 104, 105, 115, 95, 105, 115, 95, 97, 95, 116],

"secret": "secret256"

}

// Response

{

"result": "83696d00a4e4def3a9ca1c3e61b899cb",

"time": 144329411

}What if we send a malformed a? The exception is raised in a lower level function and is handled by encrypt(self, m). It is good to see the time measured.

{"a": [[]], "secret": "secret256"} // Request

{"result": "4572726f72", "time": 45599} // Response ("4572726f72" is Error hexed)Currently we are measuring nothing, since exception is raised before the first function call is completed. Let's throw after the first function call:

{"a": [1337, []], "secret": "secret256"} // Request

{"result": "4572726f72", "time": 189082} // ResponseBy throwing errors in an early stage, the time differences are more significant than the fluctuations now. Nice!

Part III: Leaking one eighth of the key⌗

A drop in response time⌗

As mentioned above, the first 369 lines from my transcript is where the Rijndael instance being initialized. Lines 1-55 is the log for computing the round constants rcon. Lines 56-369 represents how the round keys are computed, and this is part of the transcript:

56:[+] self.cache[('RotWord', (76, 78, 80, 56))] = ((78, 80, 56, 76), 505349375647612, 8617) (time spent: 8617)

57:[+] self.cache[('SubWord', (78, 80, 56, 76))] = ((47, 83, 7, 41), 505349375732640, 14356) (time spent: 14356)

58:[+] self.cache[('Add', 47, 1)] = (46, 505349375825251, 9545) (time spent: 9545)

59:[+] self.cache[('Add', 83, 0)] = (83, 505349375902264, 9931) (time spent: 9931)

60:[+] self.cache[('Add', 7, 0)] = (7, 505349375978725, 9218) (time spent: 9218)

61:[+] self.cache[('Add', 41, 0)] = (41, 505349376055553, 9776) (time spent: 9776)

62:[+] self.cache[('Add', (47, 83, 7, 41), (1, 0, 0, 0))] = ((46, 83, 7, 41), 505349376123267, 320106) (time spent: 320106)

63:[+] self.cache[('Add', 84, 46)] = (122, 505349376211788, 7671) (time spent: 7671)

64:[+] self.cache[('Add', 101, 83)] = (54, 505349376306808, 11562) (time spent: 11562)

65:[+] self.cache[('Add', 115, 7)] = (116, 505349376404211, 12700) (time spent: 12700)

66:[+] self.cache[('Add', 51, 41)] = (26, 505349376486636, 9646) (time spent: 9646)

67:[+] self.cache[('Add', (84, 101, 115, 51), (46, 83, 7, 41))] = ((122, 54, 116, 26), 505349376555581, 361347) (time spent: 361347)

68:[+] self.cache[('Add', 85, 122)] = (47, 505349376655242, 16317) (time spent: 16317)

69:[+] self.cache[('Add', 101, 54)] = (83, 505349376735919, 10524) (time spent: 10524)

...AES-128 and AES-192 have less than 300 function calls computing the round keys, thus every single step taken for round keys is cached. This is not the case for AES-256. Therefore, we should attempt to leak something from $k_0$ via $\mathcal{E}_0(m, 128)$ or $\mathcal{E}_0(m, 192)$. To achieve this, let's try to make use of the error-throwing strategy we discussed in the last part.

{"a": [1337, []], "secret": "secret128"} // Request

{"result": "4572726f72", "time": 159767} // ResponseIterating 0 <= a[0] < 256, it is observed the request a[0] = 40 is particularly faster than the others.

{"a": [40, []], "secret": "secret128"} // Request

{"result": "4572726f72", "time": 40721} // ResponseWhat is this? Why is it faster?⌗

In short, Add(???, 40) is cached... But what is that? To understand why, look at the constructor and the encrypt_block method:

def encrypt_block(self, block):

m = tuple(tuple(block[4 * i :][:4]) for i in range(4))

m = self.Add(self.roundkeys[:4], m)

# ... omitted ...This is how the methods called during encrypt_block, in order:

encrypt_block(block)

-> [... Computes m <- block ...]

-> Add(roundkeys[:4], m)

-> Add(roundkeys[0], m[0])

-> Add(roundkeys[0][0], m[0][0]) [... 💰 Uses the cache ...]

-> Add(roundkeys[0][1], m[0][1]) [... 🔥 Throws an error ... ]

[... The subsequent functions are not called ...]

-> Add(roundkeys[0][2], m[0][2])

-> Add(roundkeys[0][3], m[0][3])

-> Add(roundkeys[1], m[1])

-> ...Therefore, that Add(???, 40) cached is actually Add(roundkeys[0][0], m[0][0]). With the same strategy, we can find a[1], a[2] and a[3] that the API calls has a smaller response time: 67, 82, 56.

{"a": [40, 67, 82, 56, []], "secret": "secret128"} // Request

{"result": "4572726f72", "time": 40665} // ResponseWhat does (40, 67, 82, 56) mean? From the cache's perspective, the function call Add(roundkeys[0], (40, 67, 82, 56)) is cached. But why is it cached? Looking back at the constructor, this is executed when the round keys are computed:

Add(w[0], Add(SubWord(RotWord(w[3])), rcon[1]))Hereby w[0] and w[3] are respectively roundkeys[0] and roundkeys[3], while rcon[1] == (1, 0, 0, 0). That said, it is very likely that (40, 67, 82, 56) is being the second argument, i.e.:

Add(SubWord(RotWord(roundkeys[3])), rcon[1]) == (40, 67, 82, 56)

# eqivalently...

sbox(roundkeys[3][1]) ^ 1 == 40

sbox(roundkeys[3][2]) == 67

sbox(roundkeys[3][3]) == 82

sbox(roundkeys[3][0]) == 56

# and equivalently...

roundkeys[3][1] == 76

roundkeys[3][2] == 100

roundkeys[3][3] == 72

roundkeys[3][0] == 118On top of that, roundkeys[3] == key[12:16]. Therefore we have recovered four bytes of $k_0$. We can further recover the first eight bytes of $k_0$ by testing a[4], a[5], ..., a[11]. Since we have 12 bytes of the key, we can exhaust to recover the secret key used for AES-128 (i.e., key[0:16])

The idea can be used when dealing with AES-192. We are able to recover key[20:24] by finding an appropriate input for a[0], a[1], a[2] and a[3], and eventually key[0:24] by brute-forcing the remaining bytes.

Part IV: The cache is not in reach for AES-256!⌗

Unfortunately, we cannot generalize the idea to recover keys under AES-256 because there are more than 300 function calls for computing round keys. Hence, the cache for Add(roundkeys[0][0], m[0][0]) and others have already expired prior than they are used. Only the round keys for the later rounds are cached. With that said, we can use $\mathcal{D}_0(c, 256)$ instead of $\mathcal{E}_0(m, 256)$ and recover the round keys generated near-end. Specifically they are roundkeys[49] == a[0:4], roundkeys[50] == a[4:8] and roundkeys[51] == a[8:12].

Since we have roundkeys[0], roundkeys[1], ..., roundkeys[5], we can retrieve key[24:32] using Z3. Eventually we found key == b'fGsOY9Xb4Eq5vLdHzDGbJ4QvQqOCQcYm' and could decrypt the flag: actf{if_you_cache_my_drift}. First 🩸!

Part V: Attacks without time-reading⌗

In short, one can send a float to determine if an item is cached. One could check if Add(roundkeys[0][0], 1337) is cached by sending the below JSON object:

{"a": [1337.0] "secret": "secret128"} // RequestIt happens that an error is thrown if and only if Add(roundkeys[0][0], 1337) is not cached. The cached output will be returned if Add(roundkeys[0][0], 1337) is cached, since ("Add", roundkeys[0][0], 1337) == ("Add", roundkeys[0][0], 1337.0). However, on the other hand, it will attempt to compute roundkeys[0][0] ^ 1337.0. This throws an error since xorring an integer with a float is not a defined behaviour.